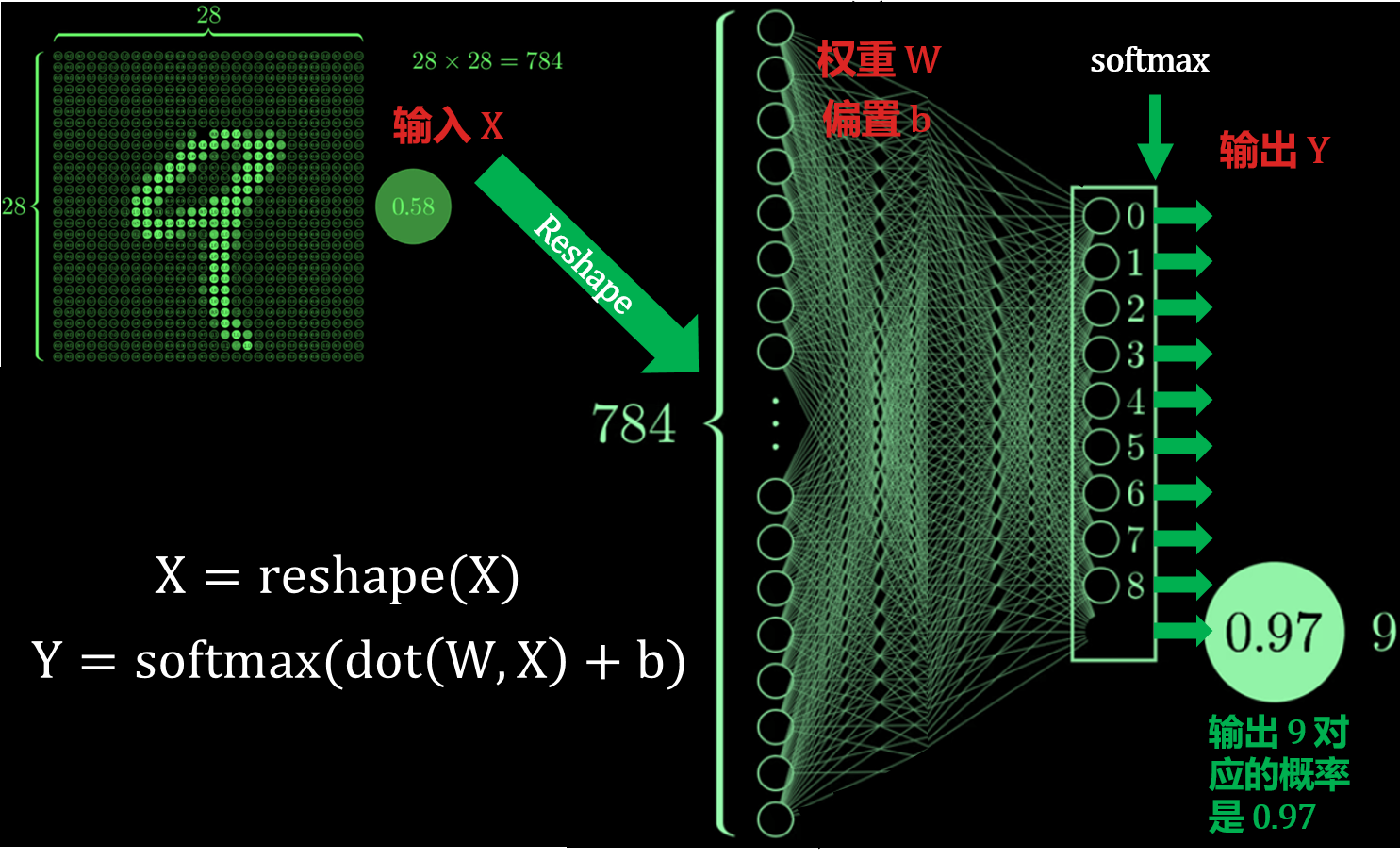

Taking a deep learning neural network as an example:

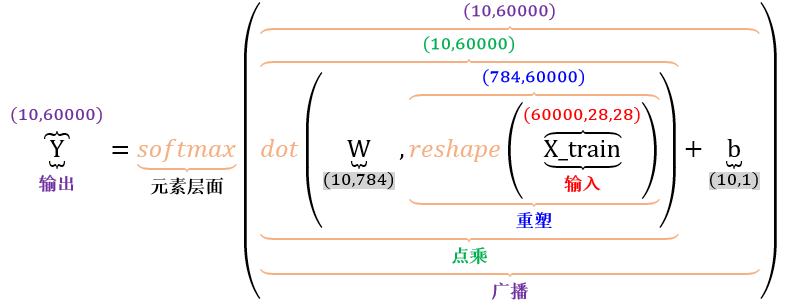

The complex formula involves four kinds of tensor operations. Reading from the inside out, in order:

- Reshape

- Tensor dot product (tensor dot)

- Broadcasting

- Element-wise operations
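As a rough illustration, the four operations can be chained in a few lines of NumPy. The sketch below assumes the pictured formula computes softmax(W · reshape(X) + b); the helper name `forward`, the tiny random batch, and all shapes are made up for illustration.

```python
import numpy as np

def forward(images, W, b):
    """Hypothetical helper: softmax(W . reshape(images) + b), one column per sample."""
    # 1. reshape: (m, h, w) -> (h*w, m)
    X = images.reshape(images.shape[0], -1).T
    # 2. tensor dot: (n_y, h*w) . (h*w, m) -> (n_y, m)
    WX = np.dot(W, X)
    # 3. broadcasting: b of shape (n_y, 1) is added to every column
    Z = WX + b
    # 4. element-wise: exponentiate, then normalise each column
    e_Z = np.exp(Z - Z.max(axis=0, keepdims=True))
    return e_Z / e_Z.sum(axis=0, keepdims=True)

rng = np.random.default_rng(0)
images = rng.random((5, 4, 4))   # 5 made-up 4x4 "images"
W = rng.normal(size=(3, 16))     # 3 made-up classes
b = rng.normal(size=(3, 1))

probs = forward(images, W, b)
print(probs.shape)               # (3, 5)
print(probs.sum(axis=0))         # each column sums to 1 (up to rounding)
```

Each of the four steps is worked through separately in the sections that follow.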

For the full article, see "张量 101" (Tensor 101) on my WeChat official account "王的机器".

import numpy as np

import tensorflow as tf

import tensorflow.keras as keras

from tensorflow.keras.datasets import mnist

from tensorflow.keras import backend as K

from IPython.display import Image

print(tf.__version__)

print(tf.keras.__version__)

Image("张量 101/NN.png", width=800, height=800)

Image("张量 101/NN computation.PNG", width=800, height=800)

Reshape

Image("张量 101/reshape.png", width=800, height=800)

Reshape examples

x = np.array( [[0, 1], [2, 3], [4, 5]] )

print(x.shape)

x

x = x.reshape( 6, 1 )

print(x.shape)

x

x = x.reshape( 2, -1 )

print(x.shape)

x
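A small sketch of two properties the examples above rely on: `reshape` keeps the elements in the same row-major order, and `-1` asks NumPy to infer the one missing dimension from the total size. The arrays `a` and `a2` are made up for illustration.

```python
import numpy as np

a = np.arange(6).reshape(3, 2)

# -1 is inferred as 6 / 2 = 3
a2 = a.reshape(2, -1)
print(a2.shape)                                  # (2, 3)

# The flattened element order is unchanged by reshape
print(np.array_equal(a.ravel(), a2.ravel()))     # True

# A shape whose size does not divide 6 is rejected
try:
    a.reshape(4, -1)
    reshape_failed = False
except ValueError:
    reshape_failed = True
print(reshape_failed)                            # True
```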

Tensor dot product (tensor dot)

x = np.array( [1, 2, 3] )

y = np.array( [3, 2, 1] )

z = np.dot(x,y)

print(z.shape)

z

x = np.array( [1, 2, 3] )

y = np.array( [[3, 2, 1], [1, 1, 1]] )

z = np.dot(y,x)

print(z.shape)

z

x = np.array( [[1, 2, 3], [1, 2, 3], [1, 2, 3]] )

y = np.array( [[3, 2, 1], [1, 1, 1]] )

z = np.dot(y,x)

print(z.shape)

z

x = np.ones( shape=(2, 3, 4) )

y = np.array( [1, 2, 3, 4] )

z = np.dot(x,y)

print(z.shape)

z

x = np.random.normal( 0, 1, size=(2, 3, 4) )

y = np.random.normal( 0, 1, size=(4, 2) )

z = np.dot(x,y)

print(z.shape)

z
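For arrays with more than two dimensions, `np.dot(x, y)` contracts the last axis of `x` with the second-to-last axis of `y` (or with its only axis when `y` is 1-D). The sketch below checks that reading against `np.einsum` and `np.tensordot` on made-up arrays `p` and `q`; it is an illustration rather than part of the original notebook.

```python
import numpy as np

rng = np.random.default_rng(0)
p = rng.normal(size=(2, 3, 4))
q = rng.normal(size=(4, 2))

r_dot = np.dot(p, q)
# Spell out the contraction: sum over k, the last axis of p and first axis of q
r_einsum = np.einsum("ijk,kl->ijl", p, q)
r_tensordot = np.tensordot(p, q, axes=([2], [0]))

print(r_dot.shape)                        # (2, 3, 2)
print(np.allclose(r_dot, r_einsum))       # True
print(np.allclose(r_dot, r_tensordot))    # True
```

The output shape is the shape of `p` without its last axis, followed by the shape of `q` without the contracted axis.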

Broadcasting

Image("张量 101/boardcasting.png", width=800, height=800)

Broadcasting examples

x = np.arange(1,4).reshape(3,1)

y = np.arange(1,3).reshape(1,2)

print( (x + y).shape )

x + y

x = np.arange(1,7).reshape(3,2)

y = np.arange(1,3).reshape(2)

print( (x + y).shape )

x + y

x = np.arange(1,25).reshape(2,3,4)

y = np.arange(1,5).reshape(4)

print( (x + y).shape )

x + y

x = np.arange(1,25).reshape(2,3,4)

y = np.arange(1,13).reshape(3,4)

print( (x + y).shape )

x + y
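The rule behind these examples: shapes are aligned from the trailing dimension, and two dimensions are compatible when they are equal or one of them is 1. A brief sketch on made-up arrays, using `np.broadcast` to read off the resulting shape and `np.broadcast_to` to make the implicit repetition explicit:

```python
import numpy as np

u = np.arange(1, 25).reshape(2, 3, 4)
v = np.arange(1, 13).reshape(3, 4)

print(np.broadcast(u, v).shape)            # (2, 3, 4)

# Broadcasting behaves as if v were repeated along the new leading axis
v_full = np.broadcast_to(v, u.shape)
print(np.array_equal(u + v, u + v_full))   # True

# (2, 3, 4) and (2, 3) do not align from the right: 4 vs 3, then 3 vs 2
w = np.ones((2, 3))
try:
    u + w
    broadcast_failed = False
except ValueError:
    broadcast_failed = True
print(broadcast_failed)                    # True
```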

Element-wise operations

x = np.random.normal( 0, 1, size=(2,3) )

y = np.random.normal( 0, 1, size=(2,3) )

x

y

x + y

x - y

x * y

x / y

np.exp(x)

def softmax(x, axis=-1):

    # subtract the max along the axis before exponentiating, for numerical stability
    e_x = np.exp(x - np.max(x,axis,keepdims=True))

    return e_x / e_x.sum(axis,keepdims=True)

y = softmax( x, axis=0 )

y

np.sum( y, axis=0 )

y = softmax( x, axis=1 )

y

np.sum( y, axis=1 )
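Subtracting the maximum before exponentiating does not change the result, because the common factor cancels in the ratio, but it keeps `np.exp` from overflowing. A minimal sketch with made-up inputs; `naive_softmax` and `stable_softmax` are hypothetical names used only here.

```python
import numpy as np

def naive_softmax(x, axis=-1):
    # Exponentiate directly: overflows once entries exceed roughly 709 in float64
    e_x = np.exp(x)
    return e_x / e_x.sum(axis=axis, keepdims=True)

def stable_softmax(x, axis=-1):
    # Shift by the max so the largest exponent is exp(0) = 1
    e_x = np.exp(x - np.max(x, axis=axis, keepdims=True))
    return e_x / e_x.sum(axis=axis, keepdims=True)

small = np.array([1.0, 2.0, 3.0])
large = small + 1000.0   # same differences, much larger magnitude

print(np.allclose(naive_softmax(small), stable_softmax(small)))    # True
print(np.allclose(stable_softmax(large), stable_softmax(small)))   # True

# exp(1001) overflows to inf, and inf / inf is nan
with np.errstate(over="ignore", invalid="ignore"):
    naive_large = naive_softmax(large)
print(np.isnan(naive_large).any())                                 # True
```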

Back to the MNIST example

# input image dimensions and class dimensions

n_W, n_H = 28, 28

n_y = 10

# the data, shuffled and split between train and test sets

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train.shape

0. Starting point

Image("张量 101/1.PNG", width=800, height=800)

1. Reshape

X = x_train.reshape( x_train.shape[0], -1 ).T

X.shape

Image("张量 101/2.PNG", width=800, height=800)
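To see what the transpose does here without downloading MNIST, the sketch below applies the same reshape to a made-up batch (`fake_images` is an assumption, not the real data). Each column of the result is one flattened image.

```python
import numpy as np

rng = np.random.default_rng(0)
# 5 made-up 28x28 grayscale images with uint8 pixel values
fake_images = rng.integers(0, 256, size=(5, 28, 28), dtype=np.uint8)

# Same reshape as above: flatten each image, then put samples in columns
X_fake = fake_images.reshape(fake_images.shape[0], -1).T
print(X_fake.shape)                                           # (784, 5)

# Column i holds image i, flattened row by row
print(np.array_equal(X_fake[:, 2], fake_images[2].ravel()))   # True
```

Putting samples in columns is what lets a weight matrix of shape (n_y, 784) multiply the whole batch at once.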

2. Dot product

W = np.random.normal( 0, 1, size=(n_y, X.shape[0]) )

b = np.random.normal( 0, 1, size=(n_y, 1) )

print( W.shape )

print( b.shape )

WX = np.dot(W,X)

WX.shape

Image("张量 101/3.PNG", width=800, height=800)

3. Broadcasting

Z = WX + b

Z.shape

Image("张量 101/4.PNG", width=800, height=800)

4. Element-wise

tensor_y = tf.nn.softmax( Z, axis=0 )

y = keras.backend.eval(tensor_y)  # convert the tensor to a NumPy array

y.shape

np.sum( y, axis=0 )

Image("张量 101/5.PNG", width=800, height=800)

Summary

Image("张量 101/6.PNG", width=800, height=800)